[EBI? – Europe’s a sort of nation!]

I and others have been trudging a lonely path trying to get people to think about managing their data in semantic form. Henry and I have pushed Chemical Markup Language for 17 years, and although its take-up is exponential, it has a small constant. (Exponential doesn’t necessarily mean explosive.) So it’s been very pleasing over the last year or so to see the interest from national laboratories.

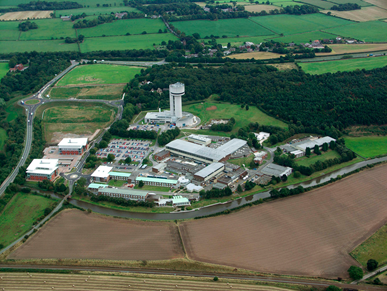

Most countries have national labs. There is always an argument as to whether money is better spent centrally or distributed. Both are right and both frequently run into mismanagement and inefficiency (but we are on Planet Earth so no surprise). National labs are required when you need synchrotrons (here’s Rutherford, STFC, with the Diamond synchrotron at the back). That’s where Cameron Neylon does all his pioneering work on opening up data. That’s where Brian Mathews ran the JISC-I2S2 program for scientific metadata design and workflows that I was part of.

[Images used without permission but I am saying nice things about them]

And Daresbury (where we held our Quixote meeting, thanks to Paul Sherwood) in the linear accelerator tower which has now gone to graceful retirement as a café and seminar building.

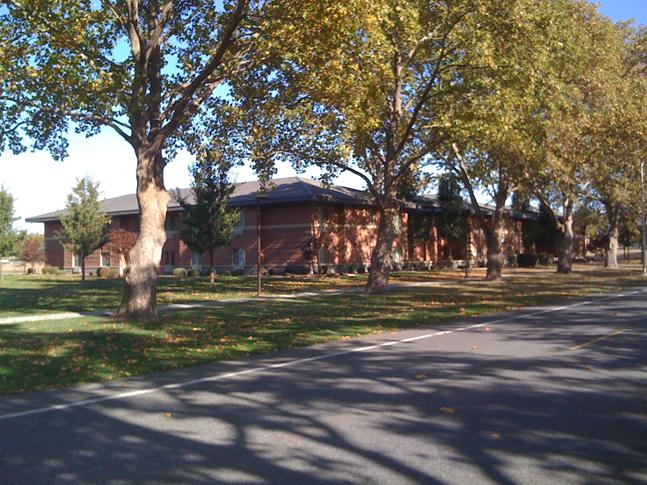

Now I am at PNNL, in gorgeous Washington State (that’s thousands of miles from Washington DC). Here’s the guest house:

(Photo courtesy of P. Murray-Rust, CC-BY)

And in February I’ll be in CSIRO, Clayton, Victoria, AU. More of that later.

So why the excitement?

National labs often have facilities (like the synchrotron or ISIS neutron source) which are used by the whole country. They are run efficiently and their primary output is data for the customer scientist. So data management is given a lot of thought and investment. Unlike academia, where you get no credit (yet) for managing data professionally, national labs have a need and a pride to do so.

So it’s not surprising that they are interested in semantic tools for data. And that’s why I have come to PNNL. People have been queuing up to talk. We are going to continue to semanticize the data from computational chemistry.

“Semanticize?”

Yes. Suppose you get a program that outputs:

Temp = 298.15

You “know” this is a temperature because 298.15 is a rather special number. It’s 25 Celsius, which is often taken as a standard, expressed in Kelvin: add 273.15 (which is 0 Celsius in Kelvin) to 25 and you get 298.15 K.

But the machine doesn’t know this. It has to be told. So here it is in CML:

<property dictRef="compchem:temp">
  <scalar units="unit:k" dataType="xsd:double">298.15</scalar>
</property>

Show that to most university chemists and their eyes roll upwards. It’s meaninglessly complex. It takes time and detracts from writing the next grant, getting the next notch on the h-index. But show it to someone in a national lab and their eyes light up. It allows them to manage their data. To search for it. To make sure that it is still fresh in 5 years’ time. That they can export it for others to re-use. Because national labs are not possessive of their data in the same way as academics are (of course this is a generalization, but as an academic I am allowed to mock them).
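To make "semanticize" a bit more concrete, here is a minimal Python sketch of the step from `Temp = 298.15` to the CML fragment above, followed by the kind of search that the markup makes possible. It is only an illustration: the `LABELS` table and the functions `semanticize` and `find_values` are names I have made up for this example, not part of any CML toolkit, and the `compchem:`, `unit:` and `xsd:` prefixes are treated as plain strings rather than resolved against real dictionaries or namespaces.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical lookup from a program's output label to a dictionary entry
# and a unit. This is exactly the knowledge the raw output does not carry.
LABELS = {
    "Temp": ("compchem:temp", "unit:k"),
}


def semanticize(line):
    """Turn a 'Label = number' line into a CML-style <property> element."""
    match = re.fullmatch(r"\s*(\w+)\s*=\s*(\S+)\s*", line)
    if match is None:
        raise ValueError(f"cannot parse line: {line!r}")
    label, value = match.groups()
    float(value)  # raises ValueError if the value is not a number
    dict_ref, units = LABELS[label]  # raises KeyError for unknown labels
    prop = ET.Element("property", {"dictRef": dict_ref})
    scalar = ET.SubElement(
        prop, "scalar", {"units": units, "dataType": "xsd:double"}
    )
    scalar.text = value
    return prop


def find_values(root, dict_ref):
    """Return (value, units) for every property carrying the given dictRef."""
    results = []
    for prop in root.iter("property"):
        if prop.get("dictRef") == dict_ref:
            scalar = prop.find("scalar")
            results.append((float(scalar.text), scalar.get("units")))
    return results


prop = semanticize("Temp = 298.15")
xml_text = ET.tostring(prop, encoding="unicode")
print(xml_text)

# Because the meaning is in the markup, the search does not depend on
# guessing what the label "Temp" meant in one particular program's output.
print(find_values(ET.fromstring(xml_text), "compchem:temp"))
```

The point of the second function is that the query is against `compchem:temp`, not against whatever string a given program happened to print. In a real setting the dictionary and units would be defined properly and the elements would live in the CML namespace; this sketch skips all of that.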

So over the last few days and the 1.8 remaining I have been getting CML installed here. We’re going to do several things:

- Create semantic output (CML) from NWChem. Ideally this is by adding CML hooks into the code (with FoX)

- Create semantic output for NMR spectra

- Install a chempound repository. Sam Adams and Jorge Estrada have been hacking this and got it working so it ingests NWChem.

Off to edit FORTRAN under CYGWIN! And install Java under Maven. Exciting times.

Do you know whether CML output will likely make it in time for 6.1? The NWChem homepage says (http://www.nwchem-sw.org/index.php/NWChem_6.1) the 6.1 release is expected by the end of the year.

Michael

We are at an early stage – I doubt it, but we will see how we get on today.

Having talked I think the answer is almost certainly no…