This is an occasional series indebted to Hammer House of Horrors. You don’t need to be a chemist to understand the message.

It’s sparked off by a comment from Totally Synthetic in this blog:

A good deal of the reasoning behind transcription of spectral data in publication is to impart meaning to the spectra. The 1H NMR spectrum of rasfonin, for instance, would be indeciferable to me, but the data written in the publication, transribed by the author and annoted for every peak would make (more) sense. It’s great to get an idea what the spectra look like, but more often than not, the actual spectra can be found in the supplementory data as a scan of the original. The combination of these two data sources gives the synthetic chemist everything they need.

Before I get onto the horror, Let me make it very clear that Tot. Syn’s blog is excellent and I’m hoping that he can meet us at the Pub on Monday lunch. His blog is a model of the future of chemoniformatics and we’d like to bounce some ideas off him.

(I’m also not specifically criticising the authors of the paper – at least not more than all other organic chemists because this supporting information (SI) is typical. I am of course suggesting gently that the process of publishing organic chemical experiments is seriously and universally broken).

The supporting information is a hamburger PDF and this example excellently makes my point. (Please readers, read it – or as much as you can manage – as I need help. Especially from anyone who is involved in graphical communication). It’s a separate document from the original paper and even though on the ACS site remarkably seems to be openly viewable. Maybe the ACS will close it sometime or maybe this exercise shows that Openness enhances downloads.

The SI draws the spectra on their sides! This is a clear indication that they aren’t meant to be read on the screen, but printed out. But the SI is 106 pages long. That’s not unusual – we have seen over 200 pages. I am sure that many organic chemists who want to read it will print it out rather than trying to read it on the screen. The spectra run from pp 36-107 with no navigational aids – if you want to link a compound to its spectrum you have to scroll through the spectra till you find its formula. Some compounds are depicted as chemical formulae on the spectra and some, but not all, contain index numbers (bold in the text).

Let’s assume that you are at a terminal and your lab has used up its paper bill. You scroll down to the infrared spectrum of a compound:

It doesn’t look very promising, so I turn my head 90 degrees to look at it. Not very comfortable. So there is a tool on Adobe reader that rotates the page to give:

This is awful. It looks like the spectra I used to collect 30 years ago when the pen plotter was running out (before that we plotted the spectra by hand it’s good for the soul). The resolution is probably 0.1 or better in the x-direction. I have no idea why it is so awful.

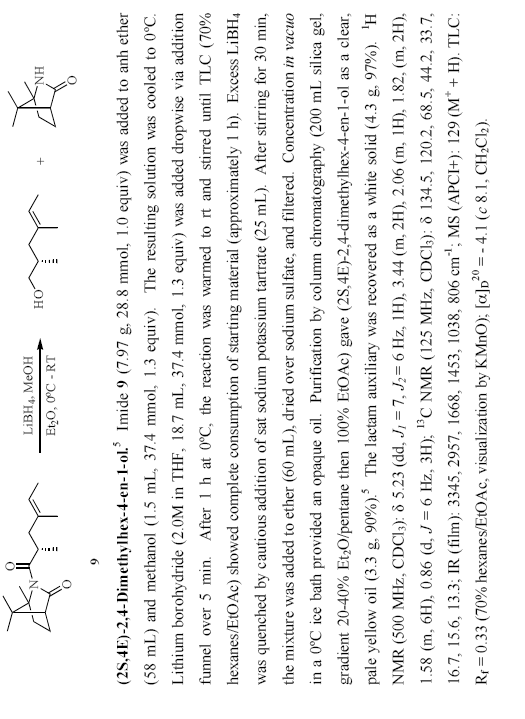

Now we

want to look back to the text where the author has made the annotations (there are no annotations on the spectra so we have to skip back 70 pages) to find:

Our helpful Adobe reader has turnd all the pages round, so we have to turn this one back again. And, I suspect, the only real way to navigate this is to print it out.

The authors obviously spent a lot of time preparing this SI. The publisher probably calls it a “creative work” – you can claim copyright on creative works. I’d call it a destructive work. It doesn’t actually have a copyright notice, although the ACS has a meta-copyright where they assert copyright over all SI (except one from Henry Rzepa and me).

Now – please help me with the PDF. I have blogged earlier about OSCAR – the data extraction tool that can extract massive information from chemical papers in HTML or even Word. But it doesn’t work with PDF. Is there any way of extracting all the characters from this document? If I try to cut and paste I can only get one page at a time? Yes, I could probably hack something like PDFBox. But otherwise PDF is an appalling efficiently way of locking up and therefore destroying information.

The message is simple:

STOP USING PDF FOR SCIENTIFIC INFORMATION

DO NOT USE PDF FOR DIGITAL CURATION

Peter: You want Xpdf – it comes with pdftotext, and it’s GPL.

Xpdf.

– A

(1) Many thanks Andrew. I’ve now tried it out. Given the awful state of PDF it does a pretty good job. But (a) it says it cannot grok PDF1.6 and so uses 1.5 (b) it gets some of the text in the wrong order. I don’t know why this is – perhaps the author added it later. I suspect it hates double columns. So it may be helpful in some cases.

Peter: I think the quality of the spectra isn’t the fault of the PDF per se … blame the scan or the resolution or both.

Also, while PDF navigation isn’t good, it’s better than a completely non-standard proprietary system.

While I agree with the stupidity of double column for screens, that too is not the fault of PDF per se, that’s the document creators fault!

OK, so do I like PDF. No (see this ). But it’s still the best thing for curation until xml AND APPROPRIATE SCHEMA are more fully established

(3) Thanks Bryan – all points taken to heart. I agree it is probably the scanning systems somewhere – but what a system! It is certainly possible to creat a much better document inside the PDF if you try – better images, better text, etc.

I tried runnign this through XPdf – it tried valiantly – and came out with “all of the right words, but not in the right order” (Morecambe and Wise). So the PDF had jumbled the word order.

The real shame is that the W3C has not come up with a compound document format which has gained widepsread acceptance. So the PDF captures most of the components (destroying others) in a single fairly robust aggregation, while HTML has umpteen links which break after downloading. I would put this at the top of my wishlist from W3C. 🙂

P.

(3) Bryan, I have to agree with your reasoning but not the conclusion. A good tool makes it easiest to do the right thing. So whilst PDF makes it possible to create good documents, I think it is entirely reasonable to blame it (or at least Acrobat) for the preponderance of poor quality PDFs.

It’s worth mentioning PDFa at this point – a standardized format of PDF, by and for the archiving community. To all intents and purposes it’s frozen by the standardization process to PDF 1.5 for the foreseeable future. I’ll reserve judgement on PDFa, but it seems to me that a standard that users will find limiting and that frustrates the aims of tool vendors is going to have a rough ride.

(3&4) How about Open Document Format? It does compound objects (nicely) and metadata and is an open standard with a good tool chain. And not being a W3C standard, it has a good chance of being adopted by people outside Massachusetts (although people seem very keen on it there too) 😉

Have you seen PDF2XL ?

They are offering a free licence for academic work until August 2007.

I have tried it out and it works very well in converting columnar data to Excel spreadsheet – as long as the columns are in strcit vertical columns and not ‘jagged’.

Might not be what you are looking for exactly, and not open source, but could be of use. They want people to know about it.

By the way, I am nothing to do with it myself! Just a library assistant interested in open access and related areas.

Tim Gray, Homerton College, Cambidge

Pingback: Unilever Centre for Molecular Informatics, Cambridge - Jim Downing » Blog Archive » How to make a hamburger